Researchers at the Massachusetts Institute of Technology (MIT) have developed a framework that extends tensor programming to continuous data. The approach, known as the continuous tensor abstraction (CTA), allows data to be stored and accessed at real-number coordinates, broadening the applicability of tensor programming in fields such as machine learning and scientific computing.

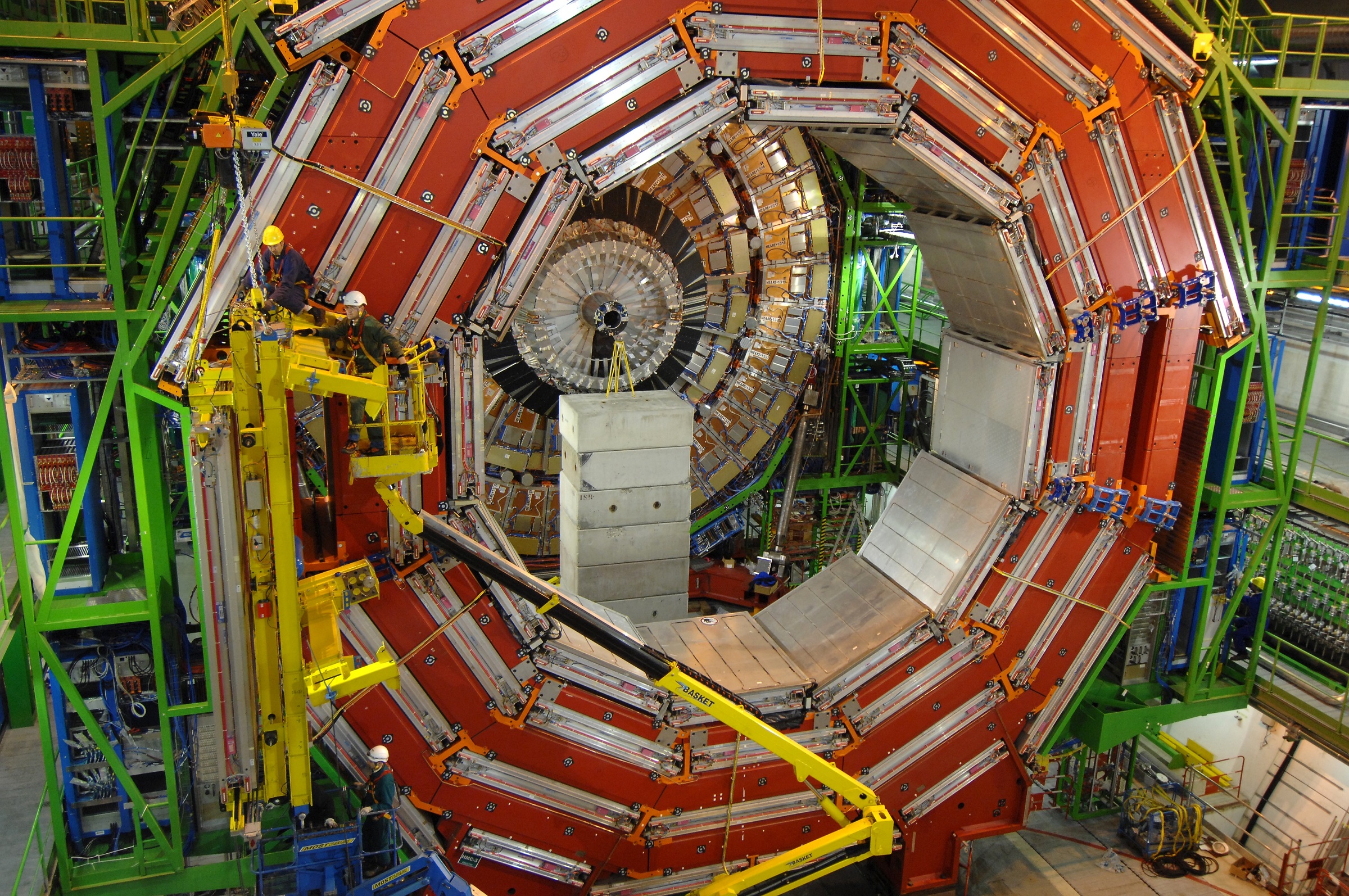

Traditionally, tensor programming has relied on the assumption that data resides on an integer grid. This assumption has served many applications well over the years, but it makes it difficult to handle real-world datasets that do not conform to a grid. Examples include 3D point clouds in sensing technologies, geometric models in computer graphics, and numerical simulations in physics. To address these challenges, MIT’s Computer Science and Artificial Intelligence Laboratory (CSAIL) introduced CTA, which allows programmers to access data using real-number coordinates, such as writing “A[3.14]” instead of just “A[3]”.

Transforming Data Representation

The team addressed the difficulty of representing continuous data by using piecewise-constant tensors. This method divides continuous space into a finite set of regions, each of which holds a single value, so that the data can be stored and processed on ordinary hardware. As a result, algorithms that once required long programs can now be expressed succinctly.
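To make the idea concrete, here is a minimal one-dimensional sketch of a piecewise-constant tensor in plain Python. It is an illustration only, not the CTA language or its API: the class name `PiecewiseConstant1D`, its parameters, and the choice of half-open intervals are all assumptions made for this example.

```python
from bisect import bisect_right


class PiecewiseConstant1D:
    """Hypothetical 1-D piecewise-constant tensor (illustration only, not the CTA API).

    The value is values[i] on the half-open interval [starts[i], starts[i+1]);
    the last interval ends at `end`. Coordinates outside [starts[0], end)
    return `fill`.
    """

    def __init__(self, starts, values, end, fill=0.0):
        if not starts or len(starts) != len(values):
            raise ValueError("need one value per interval start")
        if list(starts) != sorted(starts) or end <= starts[-1]:
            raise ValueError("starts must be sorted and end must lie past the last start")
        self.starts = list(starts)
        self.values = list(values)
        self.end = end
        self.fill = fill

    def __getitem__(self, x):
        # Real-number coordinate lookup: find the interval that contains x.
        if x < self.starts[0] or x >= self.end:
            return self.fill
        i = bisect_right(self.starts, x) - 1
        return self.values[i]


A = PiecewiseConstant1D(starts=[0.0, 2.5, 4.0], values=[10.0, 20.0, 30.0], end=6.0)
print(A[3.14])  # 3.14 falls in the interval [2.5, 4.0)
print(A[3])     # integer coordinates work the same way
```

Because only the interval boundaries and one value per interval are stored, the structure stays finite even though it answers queries at any real coordinate.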

According to Saman Amarasinghe, MIT’s Thomas and Gerd Perkins Professor of electrical engineering and computer science, “Programs that took 2,000 lines of code to write can be done in one line with our language.” This shift enables more efficient coding practices across various applications.

Another senior author, Joel Emer, a CSAIL principal investigator and Senior Distinguished Research Scientist at NVIDIA, emphasized the accessibility of the new language: “Continuous Einsums intuitively work the same as the increasingly popular Einsums on tensors defined on regular grids, making the new extension easy for programmers to adopt.”
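One way to picture a continuous Einsum is as a reduction in which the sum over an integer index becomes an integral over a real-valued one. The sketch below takes that reading as an assumption (the article itself does not spell out the semantics) and computes a continuous "dot product" of two piecewise-constant functions. The function name `continuous_dot` and the `(start, end, value)` interval encoding are hypothetical.

```python
def continuous_dot(a, b):
    """Integrate a(x) * b(x) over x for two piecewise-constant functions.

    Each argument is a list of (start, end, value) tuples describing sorted,
    non-overlapping half-open intervals; the function is 0 elsewhere.
    This mirrors the discrete Einsum "i,i->" under the assumption that the
    sum over i becomes an integral over x.
    """
    total = 0.0
    i = j = 0
    while i < len(a) and j < len(b):
        a_start, a_end, a_val = a[i]
        b_start, b_end, b_val = b[j]
        # Overlap of the two current intervals, if any.
        lo = max(a_start, b_start)
        hi = min(a_end, b_end)
        if hi > lo:
            total += a_val * b_val * (hi - lo)
        # Advance whichever interval ends first.
        if a_end <= b_end:
            i += 1
        else:
            j += 1
    return total


a = [(0.0, 2.0, 3.0), (2.0, 5.0, 1.0)]
b = [(1.0, 4.0, 2.0)]
print(continuous_dot(a, b))  # overlaps: [1, 2) and [2, 4)
```

The product of two piecewise-constant functions is itself piecewise constant, so the integral reduces to a finite sum over overlapping intervals.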

Performance Improvements and Case Studies

In a series of case studies, the CSAIL researchers demonstrated the practical benefits of CTA. One study focused on geospatial data retrieval, where CTA enabled searches in two-dimensional spaces typical of geographical information systems like Google Maps. In these tests, CTA produced search programs with 62 times fewer lines of code than the Python tool Shapely, while also achieving a ninefold increase in speed for radius searches.
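For readers unfamiliar with the term, a radius search returns every stored point that lies within a given distance of a query location. The brute-force sketch below shows only what the query computes. It uses neither CTA nor Shapely, and the function name `radius_search` and the sample coordinates are made up for illustration.

```python
def radius_search(points, center, radius):
    """Return the 2-D points that lie within `radius` of `center` (inclusive).

    Brute-force illustration: every point is checked, with no spatial index.
    """
    cx, cy = center
    r2 = radius * radius
    # Compare squared distances to avoid an unnecessary square root.
    return [(x, y) for (x, y) in points if (x - cx) ** 2 + (y - cy) ** 2 <= r2]


points = [(0.0, 0.0), (1.0, 1.0), (3.0, 4.0), (0.5, -0.5)]
print(radius_search(points, center=(0.0, 0.0), radius=1.5))
```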

CTA showed even larger code-size reductions in machine learning applications. For instance, implementing the “Kernel Points Convolution” required over 2,300 lines of code with traditional methods, whereas CTA accomplished the same task in just 23 lines, a roughly 101-fold reduction. Additional experiments on locating features in specific regions of the human genome indicated that CTA could generate code that was 18 times shorter while running at a speed comparable to existing methods.

The researchers also explored 3D deep learning tasks, particularly in settings where robots must perceive their surroundings. In tests involving neural radiance fields (NeRF), CTA ran nearly twice as fast as a comparable PyTorch tool while reducing code length by approximately 70 lines.

Jaeyeon Won, a Ph.D. student at CSAIL and lead author of the study, highlighted the significance of their work: “Previously, the tensor world and the non-tensor world have largely evolved in isolation, developing their own algorithms and data structures independently.” He added, “Using our language, we discovered that many geometric applications can be expressed concisely and precisely in continuous Einsums.”

CTA extends tensor programming to data that does not sit on a grid. As a next step, the researchers plan to investigate more intricate data structures that use variables to represent values within regions, which could lead to further applications in deep learning and computer graphics. By bridging the gap between tensor and non-tensor methodologies, the research may also benefit scientific visualization and other complex applications.